April 17, 2020

LeapMind Announces Performance Estimations of Its AI Inference Accelerator IP at COOL Chips 23

PPA estimation performed in collaboration with a company specializing in state-of-the-art AI chip layout design and manufacturing

April 17, 2020 – Tokyo, Japan – LeapMind Incorporated (located in Shibuya-ku, Tokyo, Japan; CEO: Soichi Matsuda), an industry-leading provider of business solutions built on deep learning, a core AI technology, today announced the results of performance estimations conducted on its AI inference accelerator at COOL Chips 23, an international computer technology symposium hosted by the IEEE. The silicon implementation using an ASIC design flow was carried out in cooperation with Alchip Technologies, Limited (Taipei, Taiwan; CEO: Johnny Shen).

COOL Chips 23 Presentation Contents

2012 was a breakthrough year for deep learning technology and the year LeapMind was founded. Since then, LeapMind has been a central driving force in AI-related projects at over 150 companies, developing and implementing edge devices (terminals) that are unaffected by their network environment in terms of power, cost, latency, security, and so on.

Since deep learning technology generally requires a significant amount of computing resources, its practical application in edge devices is constrained by the following issues regarding power, accuracy, and speed:

- (1) Power: Power consumption must be kept low because the available power is limited, e.g., by battery capacity or by the heat dissipation that the casing size allows.

- (2) Accuracy: The model must be lightweight enough to run within the memory available on the edge device while maintaining the accuracy of the trained AI model.

- (3) Speed: Inference must complete within a fixed period of time because a control target is waiting to use the inference results.

To address these issues, we are developing a core technology called “extremely low bit quantization technology,” which operates at both the software and hardware levels, together with a network optimized for practical applications and a dedicated compiler.

The results of these technological developments thus far were presented in the form of performance estimation values. These estimations confirmed that our technology can be expected to be effective not only on FPGA devices, our typical development target, but also in ASICs. By developing this technology further, we hope to introduce custom devices with AI functions to the market.

Performance Estimation Description and Results

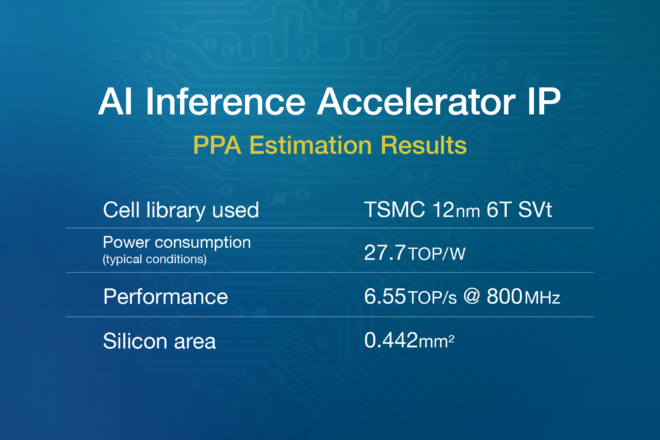

The performance estimation for ASIC technology was conducted in cooperation with Alchip. For the PPA (Power, Performance, and Area) estimation (see Note), which provides indices of performance when the design is implemented in silicon, Alchip and LeapMind examined multiple memory configurations and conducted a series of layout trials on them. Power simulations performed after placement and routing confirmed that the PPA predicted by LeapMind could be achieved.

Applications

Based on the results of these performance estimations, LeapMind will continue developing technology for ultra-low power AI inference accelerator circuits, including work on improving performance and power efficiency. Furthermore, because these estimations used no special cell library or memory and no power-saving implementation methods, there is still room to improve power efficiency at the implementation stage.

For example, by adopting design techniques from our collaborating partner, Alchip, a company that specializes in state-of-the-art layout design and manufacturing of AI/HPC chips, both static and dynamic power consumption can be predicted and reduced. Further power reduction is also possible through clock gating and through effective partitioning of the chip area to accommodate multiple power supply voltages.

Moreover, since the accelerator can be adapted for feature re-extraction for use under ultra-low voltage conditions, we believe that even greater performance improvements can be expected in actual use with ASIC technology.

About LeapMind Incorporated

Founded in 2012 with the company philosophy of “promoting new devices using machine learning technologies far and wide,” LeapMind Inc. has raised 4.99 billion yen in funding and specializes in extremely low bit quantization technology that makes deep learning compact. The company’s products are used by more than 150 companies, primarily in manufacturing, including the automotive industry. LeapMind Inc. also develops semiconductor IP based on the company’s expertise in software and hardware.

Head Office: 150-0044 3F, Shibuya Dogenzaka Sky Bldg, 28-1 Maruyama-cho, Shibuya-ku, Tokyo, Japan
Representative: Soichi Matsuda, CEO
Established: December 2012
URL: https://leapmind.io/en/

About Alchip Technologies, Limited

Alchip Technologies Ltd., headquartered in Taipei, Taiwan, is a leading global provider of silicon design and production services for system companies developing complex and high-volume ASICs and SoCs. Alchip was founded in 2003 by semiconductor veterans from Silicon Valley and Japan and provides faster time-to-market and cost-effective solutions for SoC design at mainstream and advanced process nodes, including 7nm. Customers include global leaders in AI, HPC/supercomputers, mobile phones, entertainment devices, networking equipment, and other electronic products. Alchip is listed on the Taiwan Stock Exchange (TWSE: 3661).

Head Office: 9F., No.12, Wenhu St., Neihu Dist., Taipei, Taiwan 114
Representative: Johnny Shen, CEO
Established: February 2003
URL: http://www.alchip.com

Media Contact
LeapMind Inc.
Ms. Takai, PR/Branding Division
TEL: 03-6696-6267
EMAIL: pr@leapmind.io