To accelerate the next generation of AI capabilities through faster and simpler AI computation

Pioneering the practical integration of AI, we offer advanced computing power to businesses around the world


The new standard in AI computing

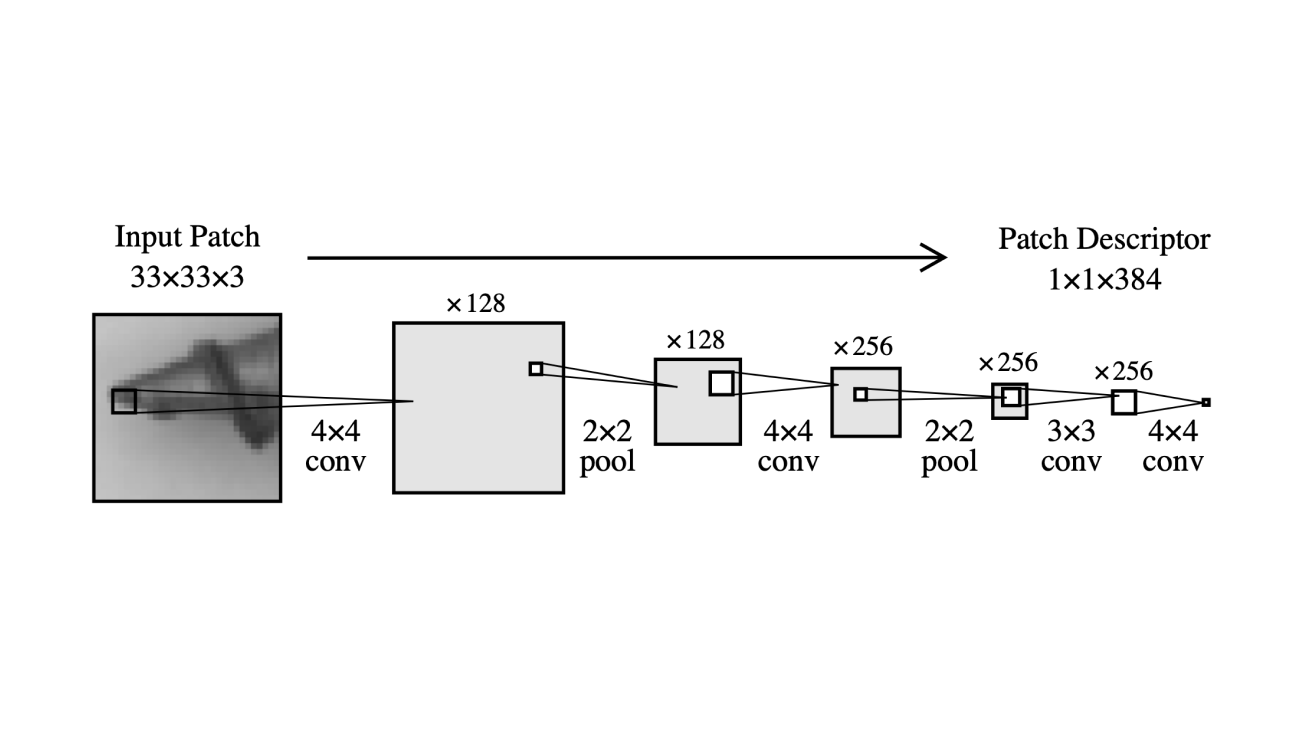

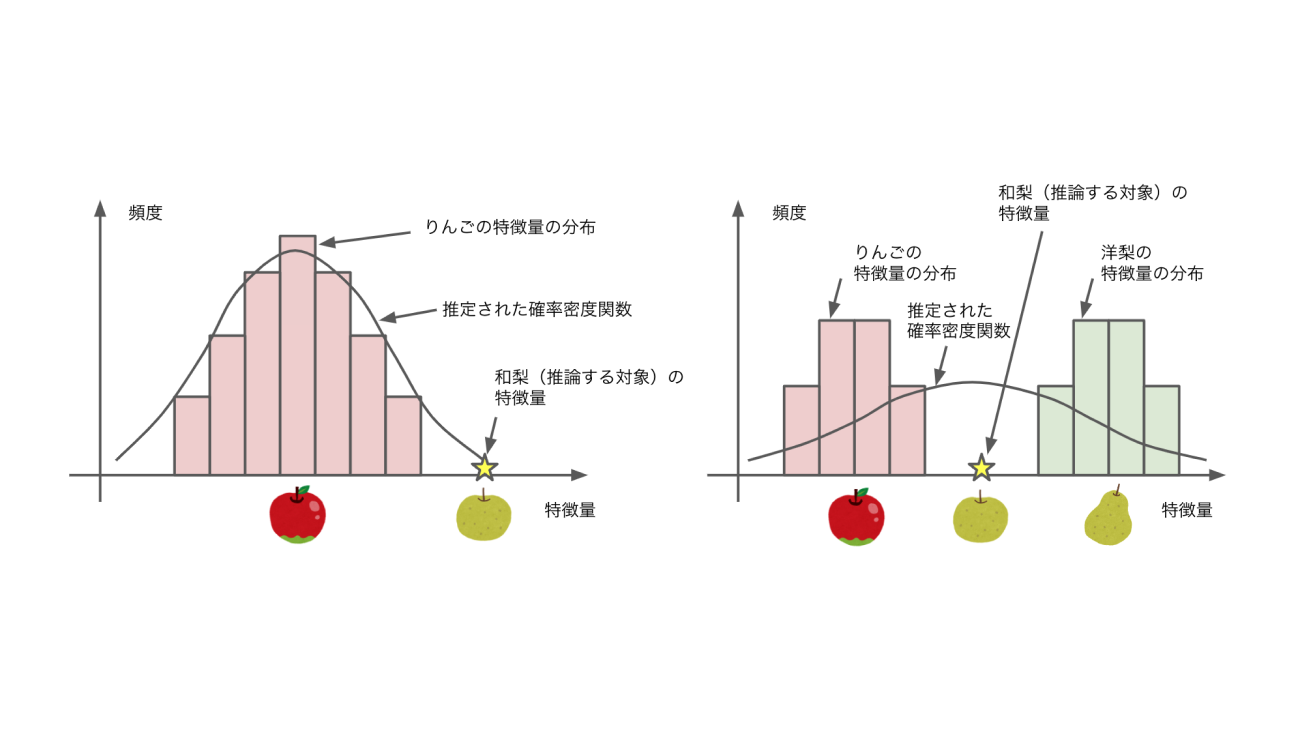

As AI models, including large language models (LLMs), have grown enormously in size, they require ever more computation. However, soaring semiconductor prices, supply shortages, and stagnating performance gains caused by the challenges of semiconductor process technology have left many companies struggling to secure enough computing power to accelerate their AI-driven business. To resolve these issues, LeapMind provides both the hardware and the software needed to bring AI into society, as a one-stop service.

CLIENTS

NEWS

October 10, 2023

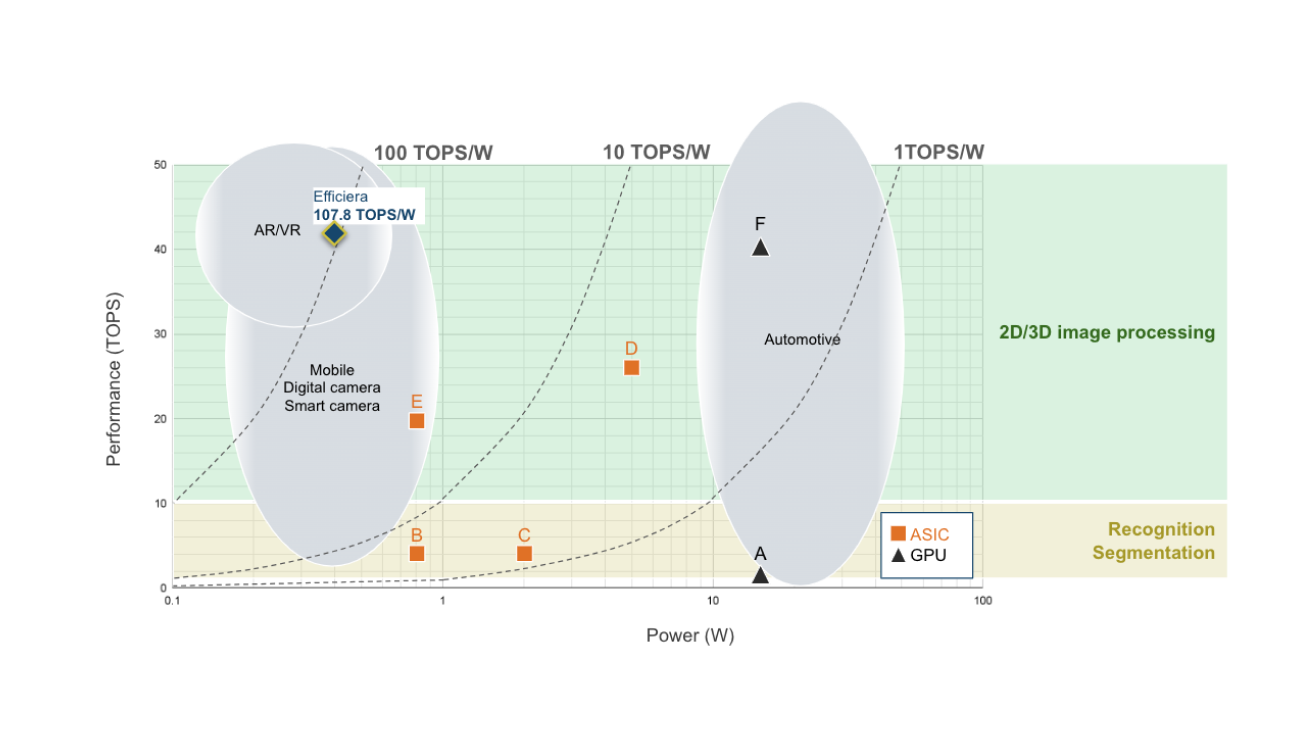

LeapMind's New AI Chip Paves the Way for Unprecedented Cost-Effective AI Computing

August 1, 2023

LeapMind’s Ultra-Low-Power AI Accelerator IP “Efficiera” Achieved Industry-Leading Power Efficiency of 107.8 TOPS/W

January 31, 2023

[Press release] Yoshitaka Ushiku Appointed as Technical Advisor